Relative Mean Square Error addresses one drawback of MSE: its sensitivity to the mean and scale of the predictions. Here we divide the MSE of our model by the MSE of a model that uses the mean as the predictor, i.e. a model whose line of best fit is simply the mean of the Y variable. The output is a ratio: if it is greater than 1, our model is not even as good as a model that simply predicts the mean for every observation.

Mean Absolute Error (MAE) is similar to MSE in that we again take the difference between the predicted and the actual value and divide by the number of values; however, instead of squaring the difference, we take its absolute value. Taking the absolute value is important: without it, negative differences would cancel out positive differences on summation, and the total could come out as zero even when individual predictions are wrong. Because the errors are not squared, a single large error counts only in proportion to its size, so MAE deals with outliers better than MSE/RMSE.
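The ratio and the absolute-value idea described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function names (`mse`, `mae`, `relative_mse`) and the small data lists are made up for the example and do not come from any particular library.

```python
def mse(y_true, y_pred):
    """Mean of the squared differences between actual and predicted values."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def mae(y_true, y_pred):
    """Mean of the absolute differences between actual and predicted values."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def relative_mse(y_true, y_pred):
    """MSE of the model divided by the MSE of a model that always predicts the mean.

    Assumes y_true is not constant, otherwise the baseline MSE is zero.
    """
    mean_y = sum(y_true) / len(y_true)
    baseline = [mean_y] * len(y_true)
    return mse(y_true, y_pred) / mse(y_true, baseline)


# Hypothetical data, chosen only to make the arithmetic easy to follow.
y_true = [1.0, 2.0, 3.0, 4.0, 5.0]
close_fit = [1.5, 1.5, 3.0, 4.5, 4.5]   # small errors in both directions
shifted = [t + 2.0 for t in y_true]     # right pattern, wrong mean

# Without absolute values the signed errors of close_fit cancel out.
signed_mean_error = sum(t - p for t, p in zip(y_true, close_fit)) / len(y_true)

print("signed mean error:", signed_mean_error)
print("MAE:", mae(y_true, close_fit))
print("relative MSE, close fit:", relative_mse(y_true, close_fit))
print("relative MSE, shifted:", relative_mse(y_true, shifted))
```

In this sketch the shifted predictions follow the pattern perfectly but still score a ratio above 1, which is the situation the text describes: on MSE alone they look worse than simply predicting the mean.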

RMSE is a popular measure for evaluating regression models because it is easy to understand. It rests on the assumption that the errors are unbiased and follow a normal distribution. RMSE is also commonly used when selecting features: it is calculated for different combinations of features to see whether a feature significantly improves the model's predictions.

One drawback of MSE/RMSE is that it is sensitive to outliers, so outliers must be removed for MSE/RMSE to function properly. Let's understand this with an example. We have a dataset with 10 observations, we apply two different regression models to it, and both models give an MSE of 1. However, if we look in depth, we find that the first model's predictions were off by $1 on all 10 observations, while the second model predicted 9 out of 10 observations correctly and was off by about $3.16 on the last one (3.16 squared is roughly 10, which averaged over 10 observations gives an MSE of 1). Thus, for MSE a single big error has the same effect as a lot of small errors: because the error is squared in the formula, the single big error made by Model-Two has the same impact as the 10 small errors made by Model-One.

This problem becomes evident when we have outliers in our data. For example, take a dataset with 11 observations, a dependent variable 'Stress Level' and an independent variable 'Results'. We fit a regression model on it and get a line of best fit. The points that form the line are the predictions, and the distance from each actual data point to the corresponding predicted point is the error. However, if we introduce an outlier, the model will try to accommodate it, producing a different line of best fit and skewing the results. This happens especially when the metric used to calculate the error is MSE.

Another drawback of these measures is that, unlike correlation coefficients, they are sensitive to the mean and scale of the predictions: if our model gets the mean right, the MSE drops significantly even when the pattern is completely missing. In the example above, if our line of best fit is simply the mean of the actual values, our MSE can be significantly lower than that of a line of best fit that gets the tendency right but misses the mean. Conversely, when the pattern is correct and the mean is wrong, the MSE comes out poor and fails to tell us that the pattern was predicted correctly. For MSE, therefore, the baseline is the mean, and the measure is highly dependent on and sensitive to the mean and the scale.

Relative Mean Square Error, the ratio of the model's MSE to the MSE of a model that always predicts the mean, can be used to compare models whose errors are measured in different units. The formula for Relative Mean Square Error is

$$\text{Relative MSE} = \frac{\sum_{i=1}^{n} (y_i - \hat{y}_i)^2}{\sum_{i=1}^{n} (y_i - \bar{y})^2}$$

where $y_i$ is the actual value, $\hat{y}_i$ is the predicted value and $\bar{y}$ is the mean of the actual values.
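The two-model example can be checked with a short Python sketch. The actual values below are hypothetical, and the single large error is assumed to be the square root of 10 (about 3.16), since that is the size one error must have for the MSE over 10 observations to equal 1.

```python
import math


def mse(y_true, y_pred):
    """Mean of the squared differences between actual and predicted values."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


# Hypothetical actual values for 10 observations.
y_true = [20.0] * 10

# Model-One: every prediction is off by 1.
model_one = [t + 1.0 for t in y_true]

# Model-Two: nine exact predictions, one prediction off by sqrt(10).
model_two = list(y_true)
model_two[-1] += math.sqrt(10)

mse_one = mse(y_true, model_one)
mse_two = mse(y_true, model_two)

# The largest individual error reveals what the MSE hides.
largest_one = max(abs(t - p) for t, p in zip(y_true, model_one))
largest_two = max(abs(t - p) for t, p in zip(y_true, model_two))

print("MSE:", mse_one, mse_two)
print("largest error:", largest_one, largest_two)
```

Both models end up with practically the same MSE even though their error profiles are very different, which is why looking only at MSE can be misleading when outliers are present.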

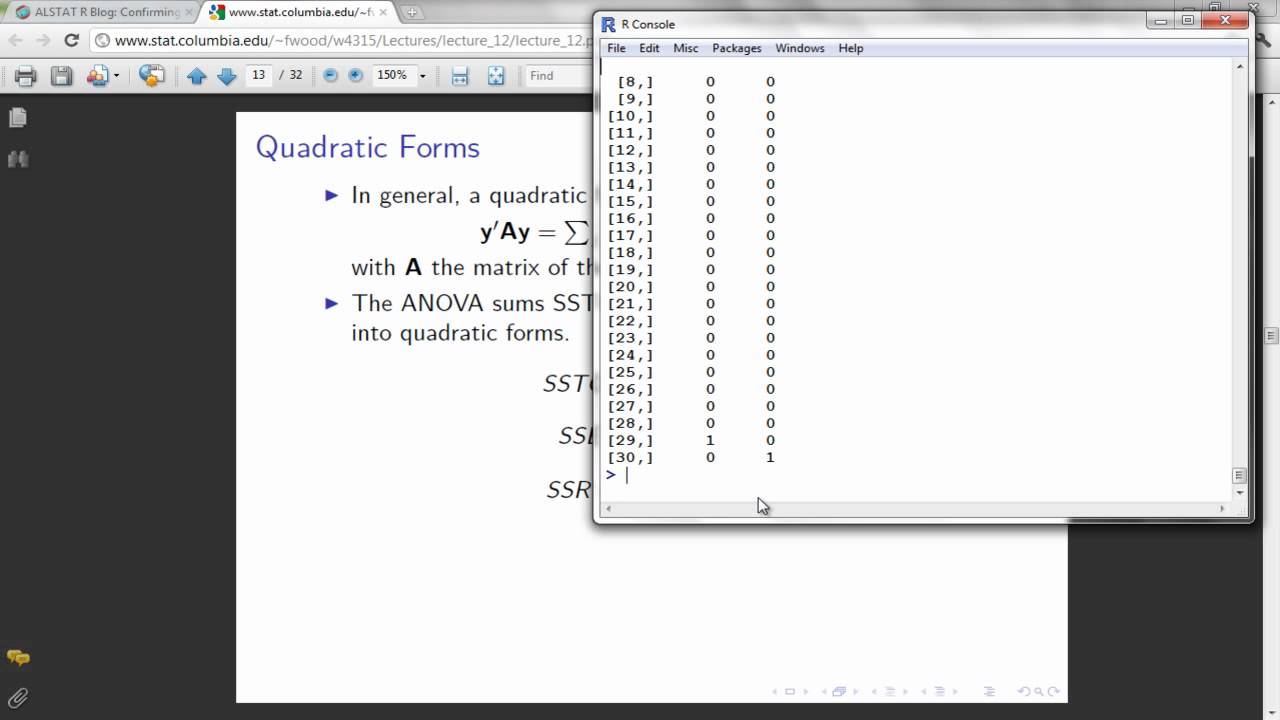

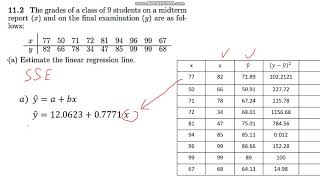

Sum Squared Error (SSE), Mean Square Error (MSE) and Root Mean Square Error (RMSE)

This is the most common way of evaluating a regression model. To find the Sum Squared Error, we first take the difference between the true and the predicted value and square it; summing all of these squared differences gives the Sum Squared Error. If we divide this value by the number of observations, we get the Mean Square Error. By taking the square root of the MSE, we get the Root Mean Square Error. We want the RMSE to be as low as possible: the lower the RMSE, the better the model's predictions. A higher RMSE indicates large deviations between the predicted and actual values.
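The three steps (sum the squared differences, divide by the number of observations, take the square root) can be written out directly. The sketch below uses plain Python with made-up numbers; the function names are chosen for the example and are not taken from any library.

```python
import math


def sse(y_true, y_pred):
    """Sum Squared Error: sum of squared differences between true and predicted values."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred))


def mse(y_true, y_pred):
    """Mean Square Error: SSE divided by the number of observations."""
    return sse(y_true, y_pred) / len(y_true)


def rmse(y_true, y_pred):
    """Root Mean Square Error: square root of the MSE."""
    return math.sqrt(mse(y_true, y_pred))


# Hypothetical true and predicted values.
y_true = [3.0, 5.0, 7.0, 9.0]
y_pred = [2.0, 5.0, 8.0, 11.0]

print("SSE:", sse(y_true, y_pred))
print("MSE:", mse(y_true, y_pred))
print("RMSE:", rmse(y_true, y_pred))
```

Because RMSE is the square root of MSE, it is expressed in the same units as the target variable, which is part of why it is easy to interpret.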

